UNESCO Report Exposes Gender Bias in Leading AI Models

In March, UNESCO published a new study revealing alarming evidence of regressive bias against girls and women in large language models (LLMs) such as OpenAI’s GPT-3.5 and GPT-2 and Meta’s Llama 2.

Key Findings:

- Gender Stereotyping: Open-source models like Llama 2 and GPT-2 commonly associate men with more diverse, high-status careers, such as engineers or doctors. In contrast, women are frequently tied to undervalued or stigmatized roles such as “domestic servant,” “cook” or “prostitute.”

- Sexist Language: When tasked with completing sentences that start with a person’s gender, about 20% of responses from Llama 2 exhibited sexist and misogynistic attitudes, including portrayals of women as sex objects and property of their husbands.

- Bias Against LGBTQ+ Individuals: Both Llama 2 and GPT-2 often generate negative portrayals of LGBTQ+ individuals.
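
A figure such as “about 20% of responses” is a simple proportion: generate many completions for each prompt, label each one, and report the share that was flagged. The Python sketch below illustrates only that arithmetic. It assumes the completions have already been labeled by reviewers or a classifier; the function name `flagged_share`, the model names, and the sample labels are hypothetical and do not come from the UNESCO study.

```python
from collections import defaultdict


def flagged_share(records):
    """Return {model: fraction of completions labeled as sexist}.

    `records` is an iterable of (model_name, is_flagged) pairs.
    """
    totals = defaultdict(int)
    flagged = defaultdict(int)
    for model, is_flagged in records:
        totals[model] += 1
        if is_flagged:
            flagged[model] += 1
    return {model: flagged[model] / totals[model] for model in totals}


# Hypothetical labels, invented purely for illustration.
sample = [
    ("model_a", True), ("model_a", False), ("model_a", False),
    ("model_a", False), ("model_a", False),
    ("model_b", False), ("model_b", False),
]
shares = flagged_share(sample)
for model, share in sorted(shares.items()):
    print(f"{model}: {share:.0%} of completions flagged")
```

In practice, the hard part is the labeling step, which this sketch takes as given.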

The study found the most significant gender bias in open-source LLMs such as Llama 2 and GPT-2, which are prized for being free and easily accessible. That same openness could make them a vital tool for addressing and reducing bias through global collaboration, an advantage that closed, proprietary models such as GPT-3.5 and Google’s Gemini do not offer.

The study highlights the importance of collaboration among various stakeholders, including technologists, civil society, and affected communities, in policymaking. This collaborative approach ensures that a wide range of perspectives is taken into account, which in turn leads to AI systems that are fairer and less likely to cause harm.

Recommendations for Action:

- Policy Measures: Governments and policymakers should introduce regulations mandating transparency about AI algorithms and the datasets used to train them, so that biases can be detected and corrected. Policies that prevent biases from being introduced or perpetuated during data collection and algorithm training are also critical.

- Technological Interventions: AI developers should prioritize the creation of diverse and inclusive training datasets to mitigate gender bias at the outset of the AI development cycle. Moreover, integrating bias mitigation tools within AI applications will enable users to report biases and contribute to ongoing model refinement.

- Educational and Awareness Initiatives: It’s vital to conduct education and awareness campaigns to sensitize developers, users, and stakeholders to the nuances of gender bias in AI. Collaborative efforts to set industry standards for mitigating gender bias and engaging with regulatory bodies are essential to extend these efforts beyond individual companies.

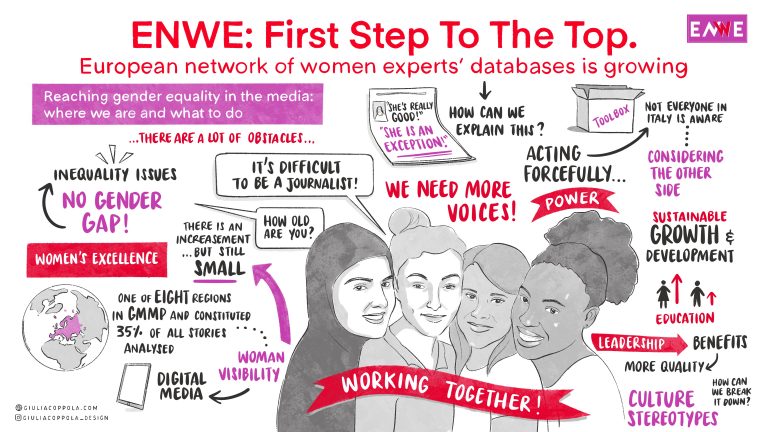

ENWE’s Impact on the Evolution of AI

As highlighted in the study, inclusive media content is crucial to counteract biased AI training.

For example, LLMs trained on stories that predominantly quote male experts in science might incorrectly conclude that female experts in this field are less credible or noteworthy. This can affect AI-driven news aggregators or search engines, leading them to amplify male-dominated perspectives further and underrepresent female expertise.

Conversely, when AI systems are fed gender-balanced information, they are more likely to form a comprehensive worldview, leading to fairer and more accurate outcomes.
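
One way to make “gender-balanced” concrete is to count who gets quoted in a set of stories. The Python sketch below assumes each quoted expert has already been given a gender label by hand; the function name `source_balance` and the sample data are hypothetical and are meant only to illustrate the kind of skew described above.

```python
from collections import Counter


def source_balance(quoted_sources):
    """Return each label's share of all quoted experts.

    `quoted_sources` is a list of gender labels, one per quoted expert.
    """
    counts = Counter(quoted_sources)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {label: n / total for label, n in counts.items()}


# Hypothetical labels for the experts quoted in four science stories.
quotes = ["man", "man", "man", "woman"]
balance = source_balance(quotes)
for label, share in sorted(balance.items()):
    print(f"{label}: {share:.0%} of quoted experts")
```

A corpus with shares like these would give a model three times as many examples of male expertise as of female expertise.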

That is why our initiatives – from our network of European databases of women experts to our monthly “Ask Women” newsletter highlighting prominent women professionals – not only promote gender parity in the media but also foster more gender-balanced coverage that, in turn, leads to more equitable and accurate AI outcomes.

You can read the entire UNESCO report at this link.

ENWE – European Network for Women Excellence is an advocacy group committed to creating a network of European databases that offer an extensive selection of prestigious female profiles for interviews, conferences, and panels. Find out more about our network of partners here.